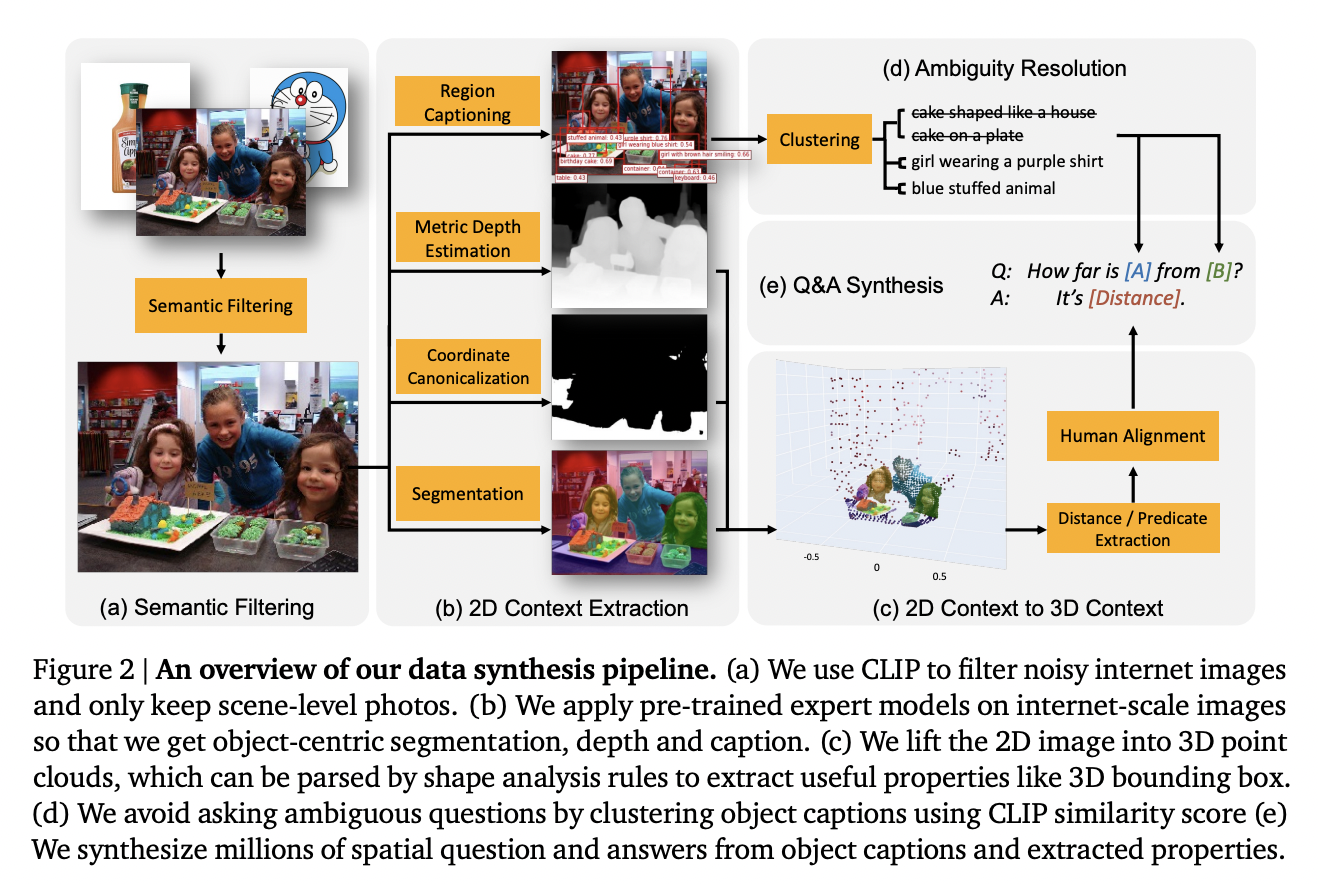

Vision-language models (VLMs) are increasingly prevalent, offering substantial advancements in AI-driven tasks. However, one of the most significant limitations of these advanced models, including prominent ones like GPT-4V, is their constrained spatial reasoning capabilities. Spatial reasoning involves understanding objects’ positions in three-dimensional space and their spatial relationships with one another. This limitation is particularly pronounced…